“Our next-generation data center design will support our current products while enabling future generations of AI hardware for both training and inference. This new data center will be an AI-optimized design, supporting liquid-cooled AI hardware” — Meta announcement, May 18, 2023

We’ve all heard about AI taking over the world, in a thousand different ways. Whether we’re talking about artificial narrow intelligence, machine learning, or the latest source of excitement, generative AI, it’s clear that we’re now living in a world where AI is becoming a prominent part of our day-to-day lives.

- An overwhelming majority (95%) of companies now integrate AI-powered predictive analytics into their marketing strategy, including 44% who have indicated that they’ve integrated it into their strategy completely.

- 43% of college students say they have experience using AI tools like ChatGPT.

- Autonomous artificial intelligence is being widely used in manufacturing and transport to accelerate production and distribution.

As AI adoption accelerates and new use cases emerge, those of us who design, build, and operate the underlying digital infrastructure are presented with new challenges.

What is it about AI that demands a different infrastructure?

Two issues come to mind. The first is architecture.

AI training infrastructure is very unlike cloud infrastructure, because AI training is a synchronous job — all elements in the cluster are working together, in concert, at high intensity, on a single service. The size of an AI training cluster is enormous — at least 5,000 servers, and probably more than 10,000 servers, each server with several CPUs and GPUs.

Since training is synchronous, the backend network that connects all the servers in a cluster is incredibly important. Issues with backend bandwidth and latency, whether caused by distance or a component failure, can seriously affect the performance of a cluster. So in order to maximize performance, AI infrastructure designers want to keep the servers as physically close to one another as possible, because long physical interconnections increase latency and are more prone to disconnection.

In other words, they want density. They want as many servers as possible in a rack. However, our industry, designed to support enterprise workloads and cloud, isn’t well suited to provide the density they demand. The Uptime Institute tells us that 75% of operators don’t support more than 20kW per rack.

AI training servers can consume up to 4kW…each.

Five servers per rack = 20kW per rack. 10,000 servers / 5 servers per rack = 2,000 racks.

That’s too many racks, too far apart from one another, and that’s not good enough. Operators have to support greater densities to support AI. At a bare minimum, designers and operators will have to support more power distribution, utilization, heat generation, and heat removal, in each rack, to cope with the densities needed.
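The rack arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a sizing tool: the function names are hypothetical, and it assumes every server draws the full 4kW and that the rack power budget is the only constraint (no allowance for networking gear, redundancy, or cooling overhead).

```python
import math


def servers_per_rack(rack_budget_kw: float, server_kw: float) -> int:
    """Whole servers that fit within one rack's power budget."""
    return int(rack_budget_kw // server_kw)


def racks_needed(total_servers: int, rack_budget_kw: float, server_kw: float) -> int:
    """Racks required to house the cluster, rounding up to a whole rack."""
    per_rack = servers_per_rack(rack_budget_kw, server_kw)
    if per_rack == 0:
        raise ValueError("rack power budget is below a single server's draw")
    return math.ceil(total_servers / per_rack)


# Figures from the text: 10,000 servers, 4kW each, 20kW per rack.
print(servers_per_rack(20.0, 4.0))      # 5
print(racks_needed(10_000, 20.0, 4.0))  # 2000
```

Changing the rack budget or the per-server draw shows how quickly the rack count moves with density.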

And that leads us to the second problem. Energy…and heat.

According to Digital Information World, “AI model training in data centers may consume up to three times the energy of regular cloud workloads, putting a strain on infrastructure.”

This isn’t a trivial problem. If AI consumes three times the power of cloud, it produces three times the heat — and today’s data centers, almost all air cooled, simply aren’t designed for the new levels of power distribution and heat removal we’ll need to support widespread AI adoption.

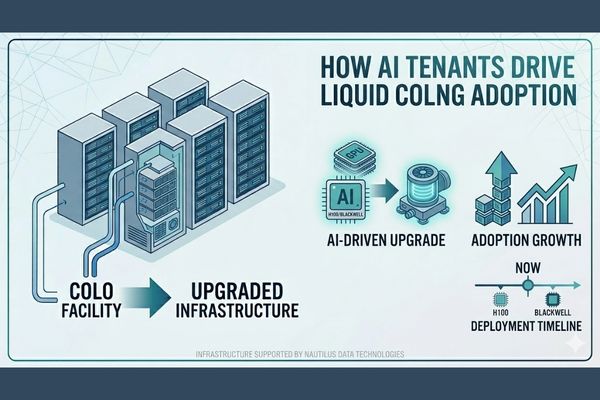

Right now, according to the Uptime Institute figure cited above, at least 75% of operators are nowhere NEAR ready to support the density, energy, and heat demands of AI. They simply cannot do the job with today’s designs. The industry is waking up to the possibility that thousands of data centers are essentially obsolete, today.

But there are two other factors that will make matters even worse.

- Generative AI models are rapidly becoming multi-modal (training with, using, and creating video, audio, speech, images, and text simultaneously). As we move past text-only generative AI to AI that can absorb, integrate, and generate rich content (music, audio, and video), we’ll see an exponential increase in the need for more servers, more GPUs, more storage capacity, and more bandwidth.

- We know that tomorrow’s servers will be hotter than before. The general-purpose processors will consume more power, each chassis will have more specialized silicon, and we’ll see designs pushing 6kW, 8kW, and beyond.

So what we have is a perfect storm. More demand for AI, more demanding AI, more density, more power, more heat…and more problems.

And our data center operators, struggling to support 20kW per rack, are being approached by enterprises, governments, and hyperscalers, and asked “Can you support densities of 80kW per rack?”

If the answer is no…the operators lose out on multi-million dollar sales.

What can the industry do to cope?

Liquid cooling is the only way forward. And it’s not just Meta saying so.

The server manufacturers see the need for liquid cooling. Charles Liang, CEO of Supermicro, recently wrote that “We hope and anticipate that 20 percent or more of worldwide datacenters will need to and will move to liquid cooling in the next several years to efficiently cool datacenters that use the latest AI server technology.”

And leading-edge data center providers see the need too. Equinix is already using liquid cooling for production workloads, and specialist data center companies, including Nautilus, are showing the world what can be done.

Today’s liquid cooling technologies, whether direct to chip or immersion cooling, are the only feasible technologies that address the density, energy, heat production, and growth of AI. Liquid cooling improves:

- Density. Today’s solutions can support as much as 80kW per rack, allowing AI infrastructure designers to support more servers per rack and shorter interconnects for each server in the cluster.

- Cooling system energy utilization. Water is 23 times more efficient than air at transporting heat. That added efficiency makes heat rejection much more energy efficient, and the energy saved can be reallocated to additional servers or other hardware.

- Reliability. Liquid cooling reduces or eliminates hot spots in data centers, a principal cause of hardware failure. Failing components are a serious problem in AI training, so reduced failure rates are a substantial advantage.

- Footprint. Instead of needing 2,000 racks for an AI cluster, liquid cooling might cut the footprint to 500 racks. That reduction in floor space has implications for site selection and overall data center utilization.

- Sustainability. Smaller sites, better energy efficiency, and reduced failure rates all contribute to sustainability.
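To make the density and footprint figures concrete, here is a rough sketch comparing a 20kW air-cooled rack with an 80kW liquid-cooled rack for the same 10,000-server cluster. The constant and function names are illustrative assumptions, and the calculation treats rack power as the only limit, with every server at the 4kW upper bound.

```python
import math

SERVER_KW = 4.0           # upper-end draw per AI training server, from the text
CLUSTER_SERVERS = 10_000  # cluster size used in the text


def rack_count(rack_kw: float,
               server_kw: float = SERVER_KW,
               servers: int = CLUSTER_SERVERS) -> int:
    """Racks needed when rack power is the only constraint (an assumption)."""
    per_rack = int(rack_kw // server_kw)
    return math.ceil(servers / per_rack)


air_racks = rack_count(20.0)     # typical air-cooled ceiling cited in the text
liquid_racks = rack_count(80.0)  # liquid-cooled density cited in the text
reduction = 1 - liquid_racks / air_racks

print(air_racks, liquid_racks)                   # 2000 500
print(f"{reduction:.0%} fewer racks")            # 75% fewer racks
print(CLUSTER_SERVERS * SERVER_KW / 1000, "MW")  # 40.0 MW of IT load either way
```

The total IT load is the same in both cases; what changes is how many racks, and therefore how much floor space and interconnect length, that load is spread across.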

It’s indisputable: liquid cooling IS better for AI. And though companies like Meta, Supermicro, and others have the engineering expertise to design their own liquid cooled data centers, not everyone does. Many enterprises and governments need a trusted partner to help them navigate the journey from air cooled to liquid cooled.

That’s where Nautilus comes in.

Our data center designs are natively liquid cooled. Our data center cooling system can use any source of water, freshwater or marine, to remove heat from the data center. We support all forms of liquid cooling inside the data center and have partnerships with a number of leaders. Our ability to rapidly build liquid cooled data centers around the world, more efficiently than others, lets us help organizations move forward faster into the world of AI.